Applied AI, the application of AI technology in business, is skyrocketing. An Accenture report on AI revealed that 84% of business executives believe AI adoption will drive their business growth. Applied AI empowers businesses with end-to-end process automation and continuous process improvement for greater productivity and profitability. However, applied AI is like a rose garden: AI-powered business applications are enticing, but you should be aware of the thorns surrounding the flowers. You need to follow frameworks such as Responsible AI while embracing AI for your business, and you should understand potential risks such as adversarial attacks and data poisoning. Understanding these concepts will help you address common hiccups in AI adoption before they choke your initiatives.

Responsible AI

Artificial intelligence is powerful. Used responsibly, AI can solve many problems and change the world. Used irresponsibly, it can become one of society’s biggest problems. Training AI models on incomplete or faulty data sets leads to biased and inaccurate results. For example, a biased AI system used in the hiring process can reject applicants based on their gender and race. The unethical use of AI can also compromise the privacy of individuals by mishandling sensitive personal data or conducting surveillance without consent. Such instances erode people’s trust in organizations that use artificial intelligence.

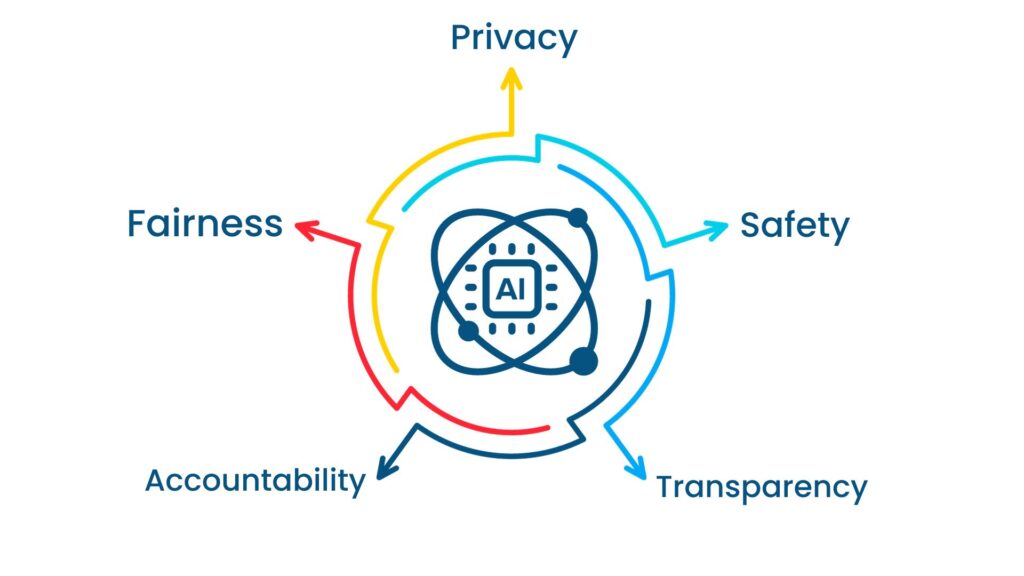

Responsible AI refers to the ethical and responsible development, deployment, and use of AI systems. It includes a set of principles, practices, and guidelines aimed at creating AI systems that align with human values and comply with legal and regulatory requirements. In 2016, tech giants Microsoft, Amazon, Google, IBM, and Meta (then Facebook) came together to create a framework around AI governance. The framework suggests five key principles: fairness, accountability, transparency, privacy, and safety.

Fairness

Fairness refers to the need to ensure that AI algorithms do not discriminate against individuals or groups based on attributes such as race, gender, age, or socioeconomic status. This principle involves designing AI algorithms and data sets in a way that avoids bias and promotes equitable outcomes for all users.
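
One simple way to check for this kind of discrimination is to compare how often each group receives a positive decision. The sketch below is a minimal illustration, not a complete fairness audit: the function names, the hiring decisions, and the group labels are all hypothetical, and the metric shown (the gap in selection rates, often called demographic parity difference) is only one of several fairness measures.

```python
from collections import defaultdict

def selection_rates(decisions, groups):
    """Return the share of positive decisions (1 = selected) per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += decision
    return {g: positives[g] / totals[g] for g in totals}

def demographic_parity_gap(decisions, groups):
    """Largest difference in selection rate between any two groups."""
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())

# Hypothetical hiring decisions (1 = shortlisted) and applicant groups.
decisions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = selection_rates(decisions, groups)
gap = demographic_parity_gap(decisions, groups)
print(rates)  # {'A': 0.75, 'B': 0.25}
print(gap)    # 0.5
```

A gap close to zero suggests the groups are selected at similar rates. A large gap, like the one in this toy data, is a signal to investigate the model and its training data further.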

Accountability

Accountability means that there should be clear lines of responsibility and ownership for AI systems and their decisions. Developers, organizations, and users should be accountable for the actions and consequences of AI systems, and mechanisms should be in place to address errors or harms.

Transparency

Transparency involves making the operations and decision-making processes of AI systems understandable and explainable to users and stakeholders. It means providing insights into how AI systems reach conclusions or make predictions, which can help build trust and facilitate human oversight.

Privacy

Privacy in AI pertains to safeguarding individuals’ personal data and ensuring that AI systems handle data responsibly and in compliance with data protection laws. It involves data anonymization, consent mechanisms, and secure data storage and transmission to protect individuals’ privacy.
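
As a rough illustration of the anonymization step, the sketch below replaces direct identifiers with keyed hashes before a record is used for training. It assumes a hypothetical record layout and a placeholder secret key. Strictly speaking, this is pseudonymization rather than full anonymization, because anyone holding the key can recompute the mapping.

```python
import hashlib
import hmac

# Hypothetical placeholder; a real key would come from a secrets manager.
SECRET_KEY = b"replace-with-a-managed-secret"

def pseudonymize(value: str, key: bytes = SECRET_KEY) -> str:
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()

def scrub_record(record: dict, identifier_fields=("name", "email")) -> dict:
    """Return a copy of the record with direct identifiers pseudonymized."""
    return {
        field: pseudonymize(value) if field in identifier_fields else value
        for field, value in record.items()
    }

# Hypothetical customer record.
record = {"name": "Jane Doe", "email": "jane@example.com", "age": 41}
safe = scrub_record(record)
print(safe["age"])        # non-identifying fields pass through unchanged
print(safe["name"][:12])  # identifiers become opaque hex strings
```

The same input always maps to the same digest, so records can still be joined on the pseudonymized field without exposing the original value.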

Safety

Safety in AI focuses on ensuring that AI systems operate securely and reliably. It includes measures to prevent AI systems from causing harm, whether intentionally or unintentionally. Safety also encompasses robustness against adversarial attacks and the ability to handle unexpected situations.

Although these principles can vary from organization to organization, your AI model should ultimately promote fairness and transparency and avoid bias and inaccuracy.

Explainable AI (XAI)

One way to implement responsible AI is to make the AI model explainable. Explainability answers important questions such as: What data does the AI model use? How does the model arrive at its decision? An average person should be able to interpret the answers. We call this ability explainable AI (XAI).

XAI helps organizations implement AI systems built on the principles of fairness, accountability, and transparency, which in turn helps them establish digital trust among their customers. Digital trust is crucial for industries that directly impact customers’ lives, such as healthcare. Suppose you built an AI model for diagnosis. Doctors wouldn’t be ready to adopt your model until they know how it comes to a conclusion, because wrong decisions by the model can affect a patient’s life and cost the doctors a fortune. Explainability can help you gain the trust of doctors: they can analyze the diagnosis process and use the information to make informed decisions.

Typically, AI models are built as black boxes. However, predictions or decisions made by black box models are hard to explain. AI developers themselves find it challenging to analyze the decision process, let alone everyday business users. White box models, on the other hand, offer insights such as:

- The criteria used in decision making.

- Why the model made a particular decision.

- The types of errors the model is prone to and ways to correct them.

These insights help you identify adversarial attacks on the AI model. An adversarial attack is an attempt to mislead an AI model into making wrong or inaccurate decisions through malicious data inputs. By looking for irregular explanations behind the model’s decisions, you can identify an attack and correct the model. The insights from explainable AI also help you eliminate bias in your AI model.
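
To make the idea of a white box model concrete, here is a minimal sketch of a linear scoring model that reports how much each input contributed to its decision. The feature names, weights, and patient values are hypothetical and chosen purely for illustration; they are not clinical guidance or the output of a real trained model.

```python
import math

# Hypothetical weights of a small logistic-regression risk model.
WEIGHTS = {"age": 0.04, "blood_pressure": 0.02, "smoker": 1.2}
BIAS = -6.0

def predict_with_explanation(features):
    """Return the predicted probability and each feature's contribution to the score."""
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    score = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-score))
    return probability, contributions

# Hypothetical patient record.
patient = {"age": 60, "blood_pressure": 140, "smoker": 1}
probability, contributions = predict_with_explanation(patient)

# List the criteria behind the decision, largest influence first.
for name, value in sorted(contributions.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: {value:+.2f}")
print(f"probability: {probability:.2f}")
```

Because every contribution is visible, a reviewer can see which criteria drove a decision and spot an explanation that looks irregular, for example a single feature that suddenly dominates the score.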

Adversarial attacks

In artificial intelligence (AI) and machine learning (ML), adversarial attacks are deliberate attempts to manipulate the behavior of a model by feeding it malicious data. Adversarial attacks exploit vulnerabilities in AI systems, leading to incorrect predictions. They can cause disasters in safety-critical applications, like autonomous vehicles or medical diagnosis. These attacks can also leak sensitive personal information or training data.

Source: Goodfellow et al., “Explaining and Harnessing Adversarial Examples,” arXiv:1412.6572 (arxiv.org)

Adversarial attacks can be categorized as white-box or black-box attacks. In a white-box attack, the attacker has access to the architecture and parameters of the target AI model. In a black-box attack, the attacker has limited or no information about the target model. However, the attacker can still craft adversarial examples through trial and error.
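
The white-box case can be sketched with the fast gradient sign method (FGSM) from the paper cited above, applied here to a tiny logistic-regression model. The weights, input, and epsilon are hypothetical values picked so the effect is easy to see; real attacks target far larger models, but the principle is the same.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(weights, bias, x):
    """Probability of class 1 under a logistic-regression model."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

def sign(v):
    return (v > 0) - (v < 0)

def fgsm(weights, bias, x, y_true, epsilon):
    """Fast gradient sign method: move each feature by epsilon in the
    direction that increases the cross-entropy loss for the true label."""
    p = predict(weights, bias, x)
    # For logistic regression, d(loss)/d(x_i) = (p - y_true) * w_i.
    grad = [(p - y_true) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]

# Hypothetical model parameters known to the attacker (white-box setting).
weights, bias = [2.0, -3.0, 1.0], 0.5
x, y_true = [0.6, 0.2, 0.4], 1

x_adv = fgsm(weights, bias, x, y_true, epsilon=0.3)
print(predict(weights, bias, x) > 0.5)      # True: original input is classified as class 1
print(predict(weights, bias, x_adv) > 0.5)  # False: perturbed input flips to class 0
```

The attacker needs the weights to compute the gradient, which is exactly the access a white-box attack assumes.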

An adversarial attacker might want to force an AI model to produce a specific incorrect output, for example, misclassifying an image of a cat as a dog. Such attacks are targeted attacks. In non-targeted attacks, the attacker doesn’t have a specific output in mind; the goal is simply to make the AI model produce an incorrect output.
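
The difference between the two goals, and the trial-and-error nature of a black-box attack, can be sketched as follows. The nearest-centroid classifier is a hypothetical stand-in for a model the attacker can only query, and the search strategy (trying progressively larger perturbations in fixed directions) is just one simple assumption about how an attacker might probe it.

```python
import itertools

# Hypothetical stand-in model: the attacker never sees these centroids.
CENTROIDS = {"cat": (0.0, 0.0), "dog": (4.0, 0.0), "bird": (0.0, 4.0)}

def classify(x):
    """Black-box model: returns only the predicted label."""
    return min(
        CENTROIDS,
        key=lambda label: sum((a - b) ** 2 for a, b in zip(x, CENTROIDS[label])),
    )

def search_attack(query, x, target=None, step=0.25, max_steps=20):
    """Trial-and-error attack that only uses the model's output.
    target=None  -> non-targeted: any label other than the original succeeds.
    target=label -> targeted: only that specific label succeeds."""
    original = query(x)
    directions = [d for d in itertools.product((-1, 0, 1), repeat=len(x)) if any(d)]
    for k in range(1, max_steps + 1):  # grow the perturbation gradually
        for d in directions:
            candidate = tuple(xi + k * step * di for xi, di in zip(x, d))
            label = query(candidate)
            hit = label != original if target is None else label == target
            if hit:
                return candidate, label
    return None

x = (1.5, 1.0)  # classified as "cat" by the stand-in model
untargeted = search_attack(classify, x)
targeted = search_attack(classify, x, target="bird")
print(untargeted[1])  # dog
print(targeted[1])    # bird
```

In this toy setup the targeted attack needs a larger perturbation than the non-targeted one, because the attacker has to reach one particular wrong label rather than whichever wrong label is closest.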

Privacy-preserving AI

Privacy-preserving AI refers to the use of artificial intelligence (AI) techniques and technologies while protecting the privacy of individuals and the confidentiality of their data. The primary goal of privacy-preserving AI is to enable AI systems to function effectively and provide valuable insights without compromising the personal or sensitive information of individuals.

Privacy-preserving AI is particularly important in applications dealing with sensitive data, such as healthcare, finance, and personal assistants. It helps organizations comply with data protection regulations (e.g., GDPR) and builds trust with users who are concerned about the security of their data.

To achieve privacy-preserving AI, you need to ensure the privacy of four key aspects:

- Training data – keep the training data private so that attackers cannot reverse-engineer it.

- Input – make sure other parties, including model creators, cannot see the input data provided by users.

- Output – make sure the output produced by the model is visible only to the user it is intended for.

- Model – protect the AI model so that attackers cannot steal it.
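
One widely used technique for the training-data and output aspects is differential privacy, which adds calibrated noise so that a released result reveals little about any single record. The article does not prescribe a specific technique, so treat the following as an illustrative assumption: a minimal Laplace-mechanism sketch over hypothetical patient records, with a hypothetical privacy budget `epsilon`.

```python
import random

def laplace_noise(scale, rng):
    """Laplace(0, scale) noise as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(records, predicate, epsilon, rng):
    """Release a count with epsilon-differential privacy.
    A counting query has sensitivity 1, so the noise scale is 1 / epsilon."""
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)

# Hypothetical patient records.
records = [
    {"id": 1, "diagnosis": "flu"},
    {"id": 2, "diagnosis": "diabetes"},
    {"id": 3, "diagnosis": "flu"},
    {"id": 4, "diagnosis": "flu"},
]

rng = random.Random(0)  # seeded only to make the demo repeatable
noisy = private_count(records, lambda r: r["diagnosis"] == "flu", epsilon=1.0, rng=rng)
print(round(noisy, 2))  # close to the true count of 3, but not exact
```

A smaller `epsilon` adds more noise and gives stronger privacy at the cost of accuracy, so the value is a business and compliance decision as much as a technical one.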

Data poisoning

Data poisoning is a malicious technique used to manipulate the training data of a machine learning model with the intent of degrading its performance or causing it to make incorrect predictions. In data poisoning attacks, adversaries inject tainted or malicious data points into the training dataset, hoping to influence the model’s learned patterns and decision boundaries. These attacks can compromise the integrity and reliability of AI and machine learning systems.
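
The effect of injected data points on a decision boundary can be seen even in a toy model. The sketch below uses a hypothetical one-dimensional nearest-centroid classifier and made-up data; it is meant only to show the mechanism, not to model a realistic attack.

```python
def train_centroids(samples):
    """Fit a nearest-centroid classifier: one mean value per label."""
    sums, counts = {}, {}
    for value, label in samples:
        sums[label] = sums.get(label, 0.0) + value
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}

def predict(centroids, value):
    """Assign the label whose centroid is closest to the value."""
    return min(centroids, key=lambda label: abs(value - centroids[label]))

def accuracy(centroids, samples):
    return sum(predict(centroids, v) == y for v, y in samples) / len(samples)

# Hypothetical clean data: class 0 has low values, class 1 has high values.
clean_train = [(1, 0), (2, 0), (3, 0), (4, 0), (6, 1), (7, 1), (8, 1), (9, 1)]
test_set = [(2, 0), (4.5, 0), (5.5, 1), (8, 1)]

# The attacker injects mislabeled outliers to drag the class-0 centroid away.
poison = [(20, 0)] * 4

clean_model = train_centroids(clean_train)
poisoned_model = train_centroids(clean_train + poison)

print(accuracy(clean_model, test_set))     # 1.0
print(accuracy(poisoned_model, test_set))  # 0.5
```

Four poisoned points are enough to move the class-0 centroid from 2.5 to 11.25, so every genuine class-0 test point is now closer to the class-1 centroid and gets misclassified.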

Attackers can perform data poisoning in several ways, such as injecting infected data, contaminating algorithms, manipulating data, and corrupting logic.

Manipulated AI models can become security risks, as attackers might exploit the models to make incorrect decisions or bypass security measures. Poisoned models might inadvertently reveal sensitive information about individuals, especially if the training data includes personal data. Successful data poisoning attacks can erode trust in AI and machine learning systems, as users may lose confidence in the model’s reliability.

Want to embrace applied AI for your business?

AI adoption for business is a complex process. From choosing the right AI model to managing data to ensuring seamless integration of the model into existing workflows, each step of implementing applied AI for your business requires careful consideration and technical expertise. This is where you need a trusted technology partner like Saxon AI.

With our expertise in applied AI strategy and implementation, we empower businesses to unlock the full potential of AI, optimizing operations and driving innovation. Let us be your guide on this transformative journey towards a smarter, more efficient future. Contact us now.
